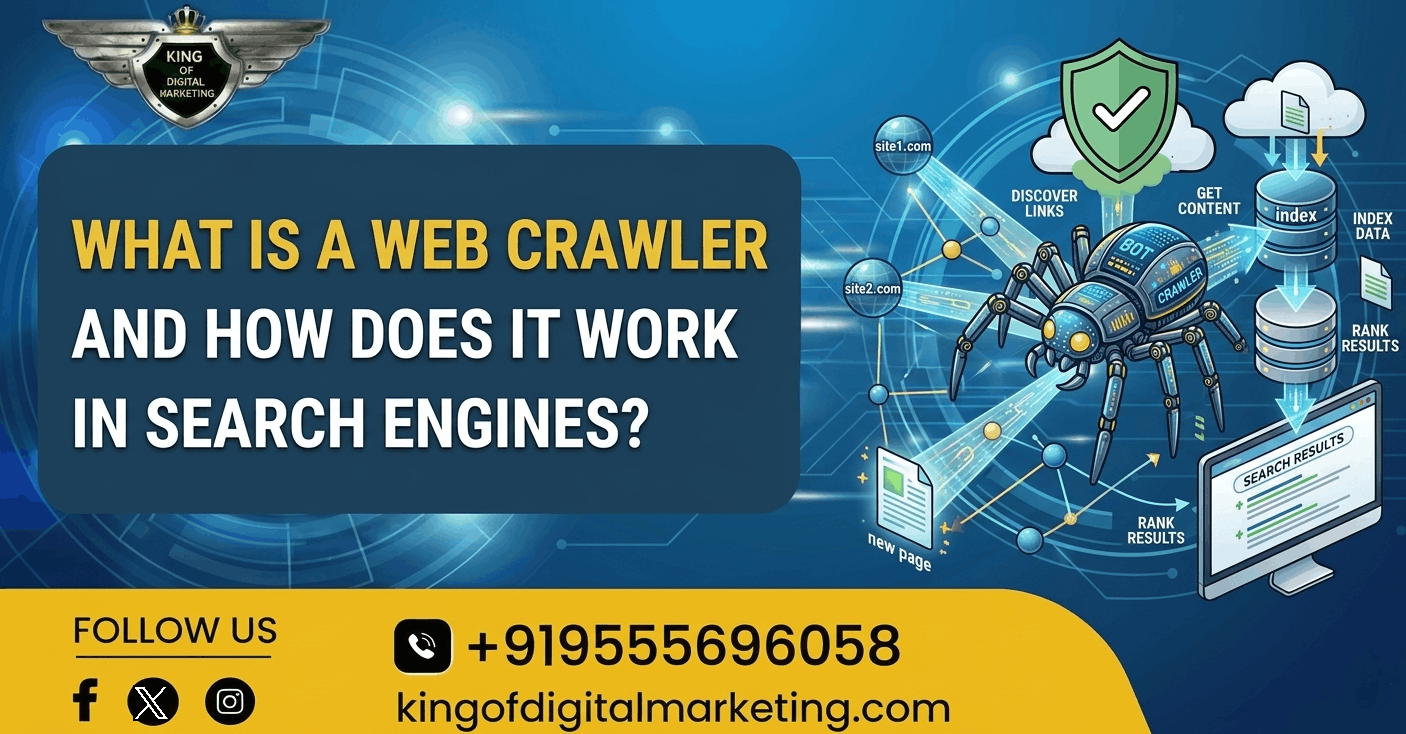

What is a Web Crawler and How Does It Work in Search Engines?

Search engines have become the main gateway to the internet. Enter a query, and within seconds a search engine returns thousands of matching web pages. Have you ever wondered how search engines find and organize billions of pages on the web? The answer lies in web crawlers.

Website owners, marketers, and businesses need to understand web crawlers to improve their online visibility. In this blog, we will explain what a web crawler in a search engine is, how it works, and why it plays a crucial role in search engine optimization (SEO).

What is a Web Crawler in a Search Engine?

A web crawler is an automated program that a search engine uses to systematically visit pages on the internet and collect information about them. It is also known as a spider, a bot, or a search engine crawler.

The main purpose of a crawler is to discover new pages, analyze existing pages, and send that information to search engine databases so those pages can appear in search results.

A web crawler works like a digital librarian: it scans websites, reads their content, and stores the information that search engines later use.

Popular search engines use their own crawlers. For example:

- Googlebot serves as the web crawler for Google

- Bingbot serves as the web crawler for Bing

- Slurp Bot serves as the web crawler for Yahoo

Why Are Web Crawlers Important for SEO?

Web crawlers are essential to SEO because search engines depend on them to find and evaluate website content.

If a crawler has trouble accessing your website, the search engine may not be able to find or index its pages. This is why technical SEO focuses on improving a website's crawlability.

Web crawlers provide the following key benefits:

- They find new web pages.

- They allow search engines to keep their databases up to date.

- They help search engines understand how websites organize their content.

- They help search engines determine which keywords match a website's content.

- They give search engines the information needed to rank pages accurately.

An SEO agency in Delhi can provide professional services that help businesses optimize their websites for better online visibility.

How Do Web Crawlers Work?

Web crawlers operate through a process made up of several steps.

1. Discovering URLs

The crawling process starts with a list of known URLs. These URLs come from:

- Previously crawled pages

- Website sitemaps

- Submitted URLs

- Links from other websites
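The discovery step above can be sketched in a few lines of Python. This is only an illustration, not how any particular search engine implements it: the function names `urls_from_sitemap` and `build_frontier`, the `EXAMPLE_SITEMAP` document, and the example.com URLs are all assumptions made for the example. It reads URLs from a sitemap in the sitemaps.org XML format and merges them with other sources into one deduplicated queue of URLs to visit.

```python
import xml.etree.ElementTree as ET
from collections import deque

# XML namespace defined by the sitemaps.org protocol.
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def urls_from_sitemap(sitemap_xml):
    """Return the <loc> values listed in a sitemap XML document."""
    root = ET.fromstring(sitemap_xml)
    return [
        loc.text.strip()
        for loc in root.findall("sm:url/sm:loc", SITEMAP_NS)
        if loc.text
    ]


def build_frontier(*sources):
    """Merge several URL sources into one queue, skipping duplicates."""
    seen = set()
    frontier = deque()
    for source in sources:
        for url in source:
            if url not in seen:
                seen.add(url)
                frontier.append(url)
    return frontier


# Hypothetical sitemap used only for this example.
EXAMPLE_SITEMAP = """<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://example.com/</loc></url>
  <url><loc>https://example.com/blog</loc></url>
</urlset>"""

previously_crawled = ["https://example.com/", "https://example.com/about"]
submitted = ["https://example.com/new"]
frontier = build_frontier(
    previously_crawled, urls_from_sitemap(EXAMPLE_SITEMAP), submitted
)
print(list(frontier))
```

The homepage appears in both the previously crawled list and the sitemap, but it is queued only once.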

2. Following Links

The crawler opens a webpage and examines every hyperlink the page contains. These links lead to other pages, both on the same website and on other sites.

The crawler follows these links to find more content on the internet. This process is often called spidering the web.

For example:

- Page A links to Page B

- Page B links to Page C

A crawler that starts at Page A can therefore reach Page C by following links, even if it did not know about Page C beforehand.
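The Page A, Page B, Page C example can be sketched as a small breadth-first crawl. To keep the sketch runnable without network access, the `FAKE_WEB` dictionary stands in for the real web; it, the `LinkExtractor` class, and the `crawl` function are hypothetical names chosen for this illustration, and a production crawler would also need politeness delays, error handling, and robots.txt checks.

```python
from collections import deque
from html.parser import HTMLParser
from urllib.parse import urljoin


class LinkExtractor(HTMLParser):
    """Collects the absolute URL of every <a href="..."> on a page."""

    def __init__(self, base_url):
        super().__init__()
        self.base_url = base_url
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value:
                    # Resolve relative links against the page's own URL.
                    self.links.append(urljoin(self.base_url, value))


# Hypothetical stand-in for the web: URL -> HTML of that page.
FAKE_WEB = {
    "https://example.com/a": '<a href="/b">Page B</a>',
    "https://example.com/b": '<a href="/c">Page C</a>',
    "https://example.com/c": '<a href="/a">Back to Page A</a>',
}


def crawl(start_url, fetch=FAKE_WEB.get):
    """Visit pages breadth-first by following links; return the visit order."""
    seen = {start_url}
    queue = deque([start_url])
    visited = []
    while queue:
        url = queue.popleft()
        html = fetch(url)
        if html is None:  # page could not be fetched; skip it
            continue
        visited.append(url)
        parser = LinkExtractor(url)
        parser.feed(html)
        for link in parser.links:
            if link not in seen:  # never queue the same URL twice
                seen.add(link)
                queue.append(link)
    return visited


print(crawl("https://example.com/a"))
```

Starting from Page A, the crawl visits A, then B, then C. The `seen` set keeps the link from Page C back to Page A from causing an endless loop.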

3. Sending Data for Indexing

After gathering information from a page, the crawler sends the collected data to the search engine's indexing system.

The search engine then stores the page in its index, a large database of all indexed pages. Only pages that are indexed can appear in search results.
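One common way to picture an index is as an inverted index, which maps each word to the pages that contain it. The sketch below is a deliberately simplified assumption for illustration: real search engine indexes are far more elaborate, and the names `tokenize`, `build_index`, and `search`, as well as the sample pages, are invented here.

```python
import re
from collections import defaultdict


def tokenize(text):
    """Split text into lowercase words made of letters and digits."""
    return re.findall(r"[a-z0-9]+", text.lower())


def build_index(pages):
    """Map each word to the set of URLs whose text contains it."""
    index = defaultdict(set)
    for url, text in pages.items():
        for word in tokenize(text):
            index[word].add(url)
    return index


def search(index, query):
    """Return the URLs that contain every word in the query."""
    words = tokenize(query)
    if not words:
        return set()
    results = set(index.get(words[0], set()))
    for word in words[1:]:
        results &= index.get(word, set())
    return results


# Hypothetical crawled pages: URL -> extracted text.
pages = {
    "https://example.com/a": "Web crawlers discover pages",
    "https://example.com/b": "Search engines rank pages",
}
index = build_index(pages)
print(search(index, "web pages"))
```

A page that was never crawled has no entry in `index`, so no query can return it. This mirrors the point that only indexed pages can appear in search results.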

Common Web Crawler Issues in SEO

Many websites face crawling problems that affect their search rankings. Common issues include:

- Pages blocked by robots.txt

- Duplicate content

- Broken internal links

- Poor website structure

- Orphan pages (pages with no internal links pointing to them)
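Some of the issues above can be checked automatically. The sketch below uses Python's standard `urllib.robotparser` module to find URLs blocked by robots.txt, and a simple link map to find orphan pages and broken internal links. The helper names (`blocked_urls`, `find_orphans`, `find_broken_links`), the sample robots.txt rules, and the `SITE_LINKS` map are assumptions made for this example. A full audit would work from a real crawl of the site.

```python
from urllib.robotparser import RobotFileParser


def blocked_urls(robots_txt, urls, user_agent="*"):
    """Return the URLs that the given robots.txt rules disallow."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


def find_orphans(site_links, home):
    """Return pages that no other page links to, excluding the homepage."""
    linked = {
        target
        for source, targets in site_links.items()
        for target in targets
        if target != source
    }
    return sorted(p for p in site_links if p not in linked and p != home)


def find_broken_links(site_links):
    """Return (source, target) pairs whose target page does not exist."""
    return sorted(
        (source, target)
        for source, targets in site_links.items()
        for target in targets
        if target not in site_links
    )


# Hypothetical robots.txt rules and internal link map for one site.
ROBOTS_TXT = "User-agent: *\nDisallow: /private/"
SITE_LINKS = {
    "/": ["/blog", "/about"],
    "/blog": ["/", "/old-post"],  # "/old-post" no longer exists
    "/about": ["/"],
    "/landing": ["/"],  # nothing links here, so it is an orphan
}

print(blocked_urls(ROBOTS_TXT, ["https://example.com/blog",
                                "https://example.com/private/report"]))
print(find_orphans(SITE_LINKS, home="/"))
print(find_broken_links(SITE_LINKS))
```

In this sample, `/private/report` is blocked, `/landing` is an orphan page, and the link from `/blog` to `/old-post` is broken.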

A technical SEO audit performed by an experienced SEO agency in Delhi can uncover and resolve these issues, improving the website's search visibility.

Conclusion

Web crawlers are a fundamental component of search engines. They enable search engines to discover, analyze, and index pages across the web. Understanding what web crawlers are and how they work helps businesses improve their website structure, resolve technical problems, and strengthen their online presence.

By optimizing their websites for crawlers and following SEO best practices, businesses can reach the right audience and improve their search engine rankings.